Monocular Depth Studio

An ongoing experiment that processes live or recorded video into monocular depth, pose, hand tracking, face mesh and other machine-vision layers, and treats those layers as material for a different kind of editing workflow.

What I was testing

A studio for machine-vision video treatment.

Processing live webcam input or uploaded clips to generate monocular depth maps as an editable visual layer.

Combining depth, pose estimation, hand tracking and face mesh in a single workspace for image treatment.

Exploring how those signals can support a different kind of video editing workflow.
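Monocular depth estimators typically produce relative rather than metric depth, so values only mean something relative to the rest of the frame. Before a depth map can act as an editable visual layer, it has to be mapped to a fixed display range. The sketch below is a minimal illustration of that step using per-frame min-max normalization to 8-bit grayscale. It is not the studio's actual code. The function name `depth_to_layer`, the list-of-rows frame format and the `invert` flag (for flipping between "near is bright" and "near is dark") are all assumptions.

```python
from typing import List

DepthMap = List[List[float]]  # hypothetical format: rows of raw depth values
Layer8 = List[List[int]]      # rows of 0-255 grayscale values


def depth_to_layer(depth: DepthMap, invert: bool = False) -> Layer8:
    """Min-max normalize one frame of relative depth into a 0-255 grayscale layer.

    Hypothetical helper: normalization is per frame because monocular depth
    is usually only relative, not metric.
    """
    flat = [v for row in depth for v in row]
    lo, hi = min(flat), max(flat)
    span = hi - lo
    layer: Layer8 = []
    for row in depth:
        out_row = []
        for v in row:
            # A flat frame (span == 0) has no usable depth contrast: map it to 0.
            t = (v - lo) / span if span > 0 else 0.0
            if invert:
                t = 1.0 - t
            out_row.append(round(t * 255))
        layer.append(out_row)
    return layer


print(depth_to_layer([[0.0, 0.5], [1.0, 0.25]]))
```

Per-frame normalization keeps every frame fully contrasted, but it can flicker on video when the depth range jumps between frames. Smoothing `lo` and `hi` over time is one common way to mitigate that.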

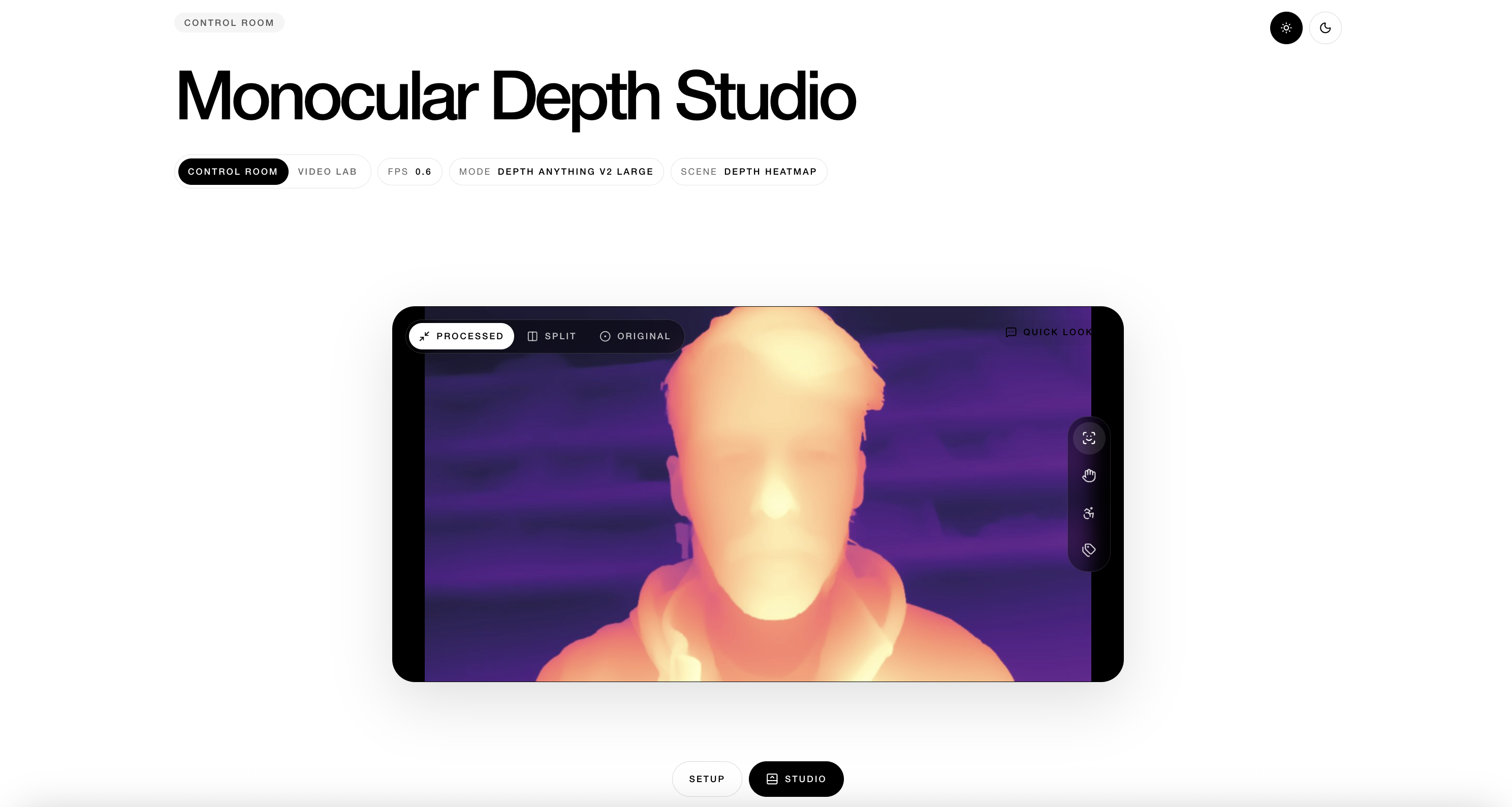

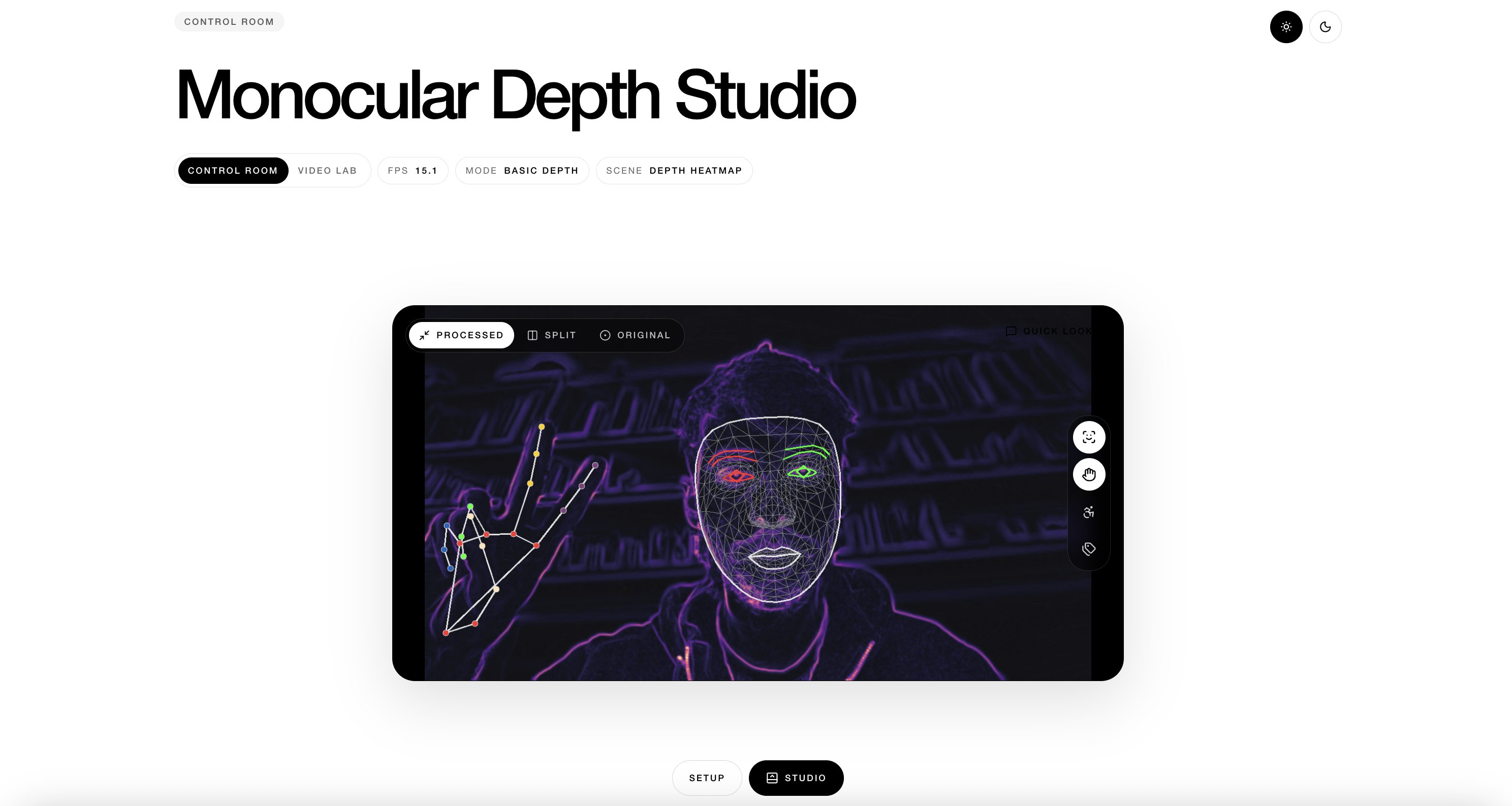

Control Room

A live workspace for webcam input, depth estimation and immediate visual feedback through scenes, finishes and overlays.

Machine vision layers

Depth maps, pose landmarks, hand tracking and face mesh can be combined as parallel layers, each one treated as editable image material.
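If each signal is rendered to its own image, treating the signals as parallel layers comes down to a layer stack with per-layer opacity, visibility and blend mode. The following is a minimal sketch under that assumption, using single-channel pixels in [0, 1] and two common blend modes: alpha "normal" and clamped "add". The `Layer` class, its fields and the layer names are hypothetical and are not taken from the studio.

```python
from dataclasses import dataclass
from typing import List

Grid = List[List[float]]  # single-channel pixel values in [0, 1]


@dataclass
class Layer:
    name: str              # hypothetical, e.g. "depth", "pose", "hands", "face_mesh"
    pixels: Grid
    opacity: float = 1.0
    mode: str = "normal"   # "normal" = alpha over, "add" = clamped additive
    visible: bool = True


def composite(layers: List[Layer], height: int, width: int) -> Grid:
    """Blend layers bottom-to-top into a single frame."""
    out = [[0.0] * width for _ in range(height)]
    for layer in layers:
        if not layer.visible:
            continue
        a = max(0.0, min(1.0, layer.opacity))  # clamp opacity to [0, 1]
        for y in range(height):
            for x in range(width):
                src = layer.pixels[y][x]
                if layer.mode == "normal":
                    out[y][x] = out[y][x] * (1.0 - a) + src * a
                elif layer.mode == "add":
                    out[y][x] = min(1.0, out[y][x] + src * a)
                else:
                    raise ValueError(f"unknown blend mode: {layer.mode}")
    return out


stack = [
    Layer("depth", [[0.2, 0.8]]),
    Layer("pose", [[1.0, 0.0]], opacity=0.5, mode="add"),
]
print(composite(stack, height=1, width=2))
```

Keeping each signal as a separate layer, instead of drawing landmarks directly into the depth image, means any layer can be hidden, re-weighted or re-blended later without re-running inference.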

Video Lab

A parallel flow for uploaded clips, with quick previews and full exports, useful for testing whether the same processing logic holds up beyond live capture.

Alternative editor logic

Instead of centering the experience on cuts and tracks, the tool focuses on transforming perception signals into visual treatments through adjustable parameters and scene behaviors.
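As an illustration of a perception signal driving a treatment through adjustable parameters, the sketch below implements a depth-band mask. Pixels whose normalized depth lies between `near` and `far` are kept, and a linear `feather` ramp softens the edges. A small time-driven "sweep" function shows how a scene behavior could animate those same parameters. Every name here (`DepthBand`, `band_weight`, `apply_band`, `sweep`) and the specific treatment are hypothetical examples, not the studio's actual scenes.

```python
from dataclasses import dataclass
from typing import List

Grid = List[List[float]]


@dataclass
class DepthBand:
    """Hypothetical treatment parameters over normalized depth in [0, 1]."""
    near: float = 0.3
    far: float = 0.7
    feather: float = 0.1  # width of the soft ramp outside each edge of the band


def band_weight(d: float, p: DepthBand) -> float:
    """1 inside [near, far], 0 far outside, linear ramps of width `feather` in between."""
    if p.feather <= 0:
        return 1.0 if p.near <= d <= p.far else 0.0
    rise = (d - (p.near - p.feather)) / p.feather
    fall = ((p.far + p.feather) - d) / p.feather
    return max(0.0, min(1.0, rise, fall))


def apply_band(depth: Grid, image: Grid, p: DepthBand) -> Grid:
    """Scale each image pixel by the band weight of its depth value."""
    return [
        [px * band_weight(d, p) for d, px in zip(drow, irow)]
        for drow, irow in zip(depth, image)
    ]


def sweep(t: float, period: float = 4.0, width: float = 0.2, feather: float = 0.05) -> DepthBand:
    """Hypothetical scene behavior: slide the band from near to far once per period (seconds)."""
    phase = (t % period) / period
    near = phase * (1.0 - width)
    return DepthBand(near=near, far=near + width, feather=feather)


frame = apply_band([[0.0, 0.5, 0.25]], [[1.0, 1.0, 0.8]], DepthBand())
print(frame)
print(sweep(2.0))
```

Separating the parameters (`DepthBand`) from the behavior that changes them over time (`sweep`) is one way to make the same treatment usable both as a manual control and as an animated scene.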